If you spend enough time in the field working with real businesses trying to use AI, a pattern becomes obvious very quickly.

The question is no longer whether AI can help inside a Microsoft-heavy environment. It can. Honestly, without any caveats.

The real question is whether you are prepared to do the work required to make it reliable, repeatable, and worth defending when it matters. That is a very different question.

A recent practitioner thread on Reddit captured a lot of what I am hearing and seeing in the market right now. People working inside Microsoft-heavy organisations keep saying the same thing.

Microsoft owns the environment. Claude is often the tool people actually reach for when they need to think, produce, and get real work done.

If you are a COO or CTO, read this through an innovation lens

The play right now isn't a transformation program. It is standing up one innovation pod, testing the limits of the tech on a real workflow, building internal muscle, and then pushing what works down the enterprise scaling path. Small. Tested. Then scaled.

That is the frame the rest of this post is written in. None of what follows is an argument for deploying across the whole organisation tomorrow. It is an argument for starting now on something small enough to control, and serious enough to learn from. Every company is going to have to innovate harder on its internal processes. You do that small, and scale what works.

The firms that build this muscle early will be the ones that can scale confidently in twelve months. The firms that wait will be running the same experiments twelve months late, against staff who have already formed their own shadow habits.

The answer is yes, if you scope the job properly

Let me get the first point out of the way. Can AI deliver real value inside a Microsoft-heavy company? Yes. The reality is that it only delivers when you scope the process correctly, invest time in building the connectors and rules that reflect your actual business, and put proper evals and tests in place.

This is where a lot of firms go wrong. They roll out a horizontal tool, give everyone access, run some basic training, and hope staff figure it out in the flow of work. What usually happens instead is inconsistency. One person gets value. Another gets frustrated. A third quietly stops using it. A fourth pushes it too far and creates risk. The organisation concludes the tool is "promising but patchy".

That isn't an AI problem. It is a deployment problem. Once you get the ingredients right (scoped workflows with usable connectors, business-specific rules, validation loops, auditability, and evals), the benefits stack quickly.

That is exactly what our AI Fitness Review is designed to surface: the prerequisites required to use AI reliably, not just experimentally.

The market is moving from access to outcomes

A lot of enterprise AI conversation is still stuck in the wrong place. Too much of it is about access, licences and broad enablement. Not enough of it is about whether the tool helps people finish meaningful work.

That distinction came through clearly in the thread. The recurring theme was that Copilot often feels strongest as an access layer and retrieval layer across the Microsoft environment, but weaker when users want help with real task completion inside live workflows. Users described it as useful in some scenarios, but also prone to leading them down long, confident dead ends when they tried to solve practical problems. Claude, by contrast, was repeatedly framed as the stronger reasoning and output engine.

That is the shift we are seeing now. The market is moving from "do we have AI?" to "does it reliably help us get the job done?" It is a much healthier framing.

I use all the major AI tools, and Claude has found a strong sweet spot

I use multiple AI tools in my own daily work. They all have strengths. This isn't a simplistic one-tool worldview.

At this moment though, Claude has found a strong sweet spot between intelligence, harness and speed of innovation. That matters. Raw model intelligence on its own isn't enough. A great model in a poor harness under-delivers. A well-integrated tool with mediocre reasoning also under-delivers. What has been impressive recently is the way Claude has been combining strong reasoning, increasingly useful workflow harnesses, and a pace of product improvement that is honestly hard to ignore.

That pace came through in the thread too. Users were not just praising the model in the abstract. They were praising Cowork, Office workflows, document handling, Claude Code, and the general sense that the product is getting better quickly.

That doesn't mean every other tool is irrelevant. Far from it. It means the combination of intelligence, usability and product velocity is starting to matter a lot more than many organisations expected.

The boring middle of work is still where the real value is

The biggest wins aren't hiding in grand one-shot prompts. They are in the boring middle of work. Spreadsheets. Documents. Client packs. Reporting. Meeting preparation. Structured analysis. Internal business artefacts. The repetitive formatting and synthesis tasks that chew up your afternoon without anyone noticing.

This is where the field feedback is strongest, and where I continue to see the highest practical ROI. People do not need AI to feel magical. They need it to remove friction.

The strongest stories right now aren't "AI replaced the whole team". They are "it cut out an hour of annoying work".

"It understood the workbook faster than I could explain it." "It restructured this file properly." "It generated the first draft in the right format." "It helped me get to the answer much faster." "It reduced the manual middle layer." That is the real 80 percent, and it is not small.

Excel is still the clearest "holy shit" moment for non-technical users

If I had to nominate one area where I have personally seen the most non-technical users have their "holy shit" moment, it is Excel. Not because it is perfect. Not because it requires no effort. But because when it works, the value is visceral.

Practitioners in the thread put it in plain terms. Claude "has been able to review and understand the linking formulas between the tabs and how the data flows through each part". One user described rewriting certain tabs to a better layout and projecting data forward another twelve months. Another had enabled VBA in spreadsheets that had been "causing a time suck". Another line, almost thrown away in the conversation, captured the shift more neatly than any vendor deck I have read this year.

"We're using Cowork to remove the Excel middle layer between our data and what people need from it."

That is the real job. The middle layer between your data and what the business actually needs from it. The afternoon-eating layer. That is what is being compressed.

For a lot of non-technical users, this is the first time AI stops feeling like an interesting chatbot and starts feeling like something that could materially change their job. You can almost see the mental switch happen. They stop asking "is this useful?" and start asking "how much of my work could this change?"

Excel still rewards effort though. The honest version came from another user in the same thread. Claude is "slightly less intuitive with Excel than other tasks", they said. "I need to be more specific with how I want it to process, and what I want the end result to look like." Then the kicker. "Overall it's less of my time than if I did it all manually."

That is the real signal. Not that it is effortless. That it is net-better when you wrap the opportunity properly. The answer isn't to walk away from the friction. The answer is to do the scoping work once and reap the compounding.

PowerPoint is improving fast, and claude.ai/design is worth paying attention to

PowerPoint isn't yet as mature a use case as Excel, but it is moving. The discussion showed mixed sentiment. Some people reported real gains creating or editing PowerPoint content through Claude and related workflows. Others pointed out the obvious limitation, which is that language models are better at structure, narrative and content than they are at free-form visual design control.

That is fair. There is an honourable mention here that I think more people should be watching closely though, and that is claude.ai/design. It is still early. Still imperfect. Still rough around the edges. But with the right design system, a careful slide outline, and a bit of discipline, it is already starting to look like the pack assembler people have always wanted.

For me, this is version 0.01 territory. It is already useful, which is exactly why it matters. If this is what the early version looks like, you should assume it gets materially better from here.

The real work is building the blueprint for reliable deployment

This is the part most firms still underestimate. The real challenge isn't just picking a model or buying licences. It is building a blueprint for deploying AI reliably, starting small and scaling what works. That is what I focus on with clients.

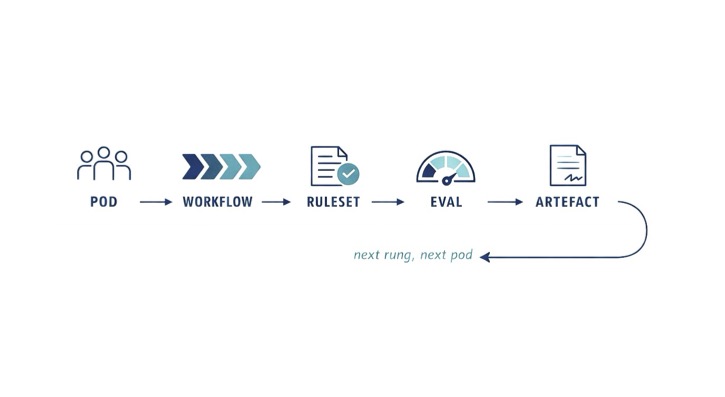

Think of it as a ladder, not a capability inventory. Rung one is a single innovation pod. One team. One workflow. One ruleset. One eval loop. One reviewable artefact. That is enough to learn from, and small enough to control.

From there you tighten the controls, repeat the pattern on the next workflow, and start building the reusable pieces as you go. Connectors into the real systems of work. Shared evals. Human-in-the-loop gates where risk requires it. A process for operationalising what works. The mistake is trying to solve the whole ladder on day one. The other mistake is climbing it without writing down what worked at each rung.
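For readers who want to see what "one workflow, one ruleset, one eval loop" might look like in practice, here is a minimal sketch. Everything in it is an assumption for illustration: `EvalCase`, `run_evals`, `needs_human_review`, the 0.9 threshold and the `summarise_stub` function are hypothetical names standing in for whatever your pod actually builds, and the stub replaces a real AI-backed step so the example runs on its own.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class EvalCase:
    """One business rule, expressed as a check on the workflow's output."""
    name: str
    input_text: str
    check: Callable[[str], bool]


@dataclass
class EvalReport:
    passed: list[str]
    failed: list[str]

    @property
    def pass_rate(self) -> float:
        total = len(self.passed) + len(self.failed)
        return len(self.passed) / total if total else 0.0


def run_evals(workflow: Callable[[str], str], cases: list[EvalCase]) -> EvalReport:
    """Run every case through the workflow and record which rules held."""
    report = EvalReport(passed=[], failed=[])
    for case in cases:
        output = workflow(case.input_text)
        bucket = report.passed if case.check(output) else report.failed
        bucket.append(case.name)
    return report


def needs_human_review(report: EvalReport, threshold: float = 0.9) -> bool:
    """Hypothetical human-in-the-loop gate: escalate when the pass rate dips."""
    return report.pass_rate < threshold


def summarise_stub(text: str) -> str:
    """Stand-in for the real AI-backed step (an assumption, not a real API)."""
    return "Summary: " + text.strip()[:80]


# Illustrative ruleset for a single scoped workflow.
CASES = [
    EvalCase("has_prefix", "Q3 revenue rose 4%.", lambda out: out.startswith("Summary: ")),
    EvalCase("keeps_figure", "Q3 revenue rose 4%.", lambda out: "4%" in out),
    EvalCase("under_100_chars", "x" * 500, lambda out: len(out) <= 100),
]

report = run_evals(summarise_stub, CASES)
print(report.pass_rate, report.failed, needs_human_review(report))
```

The point of the shape is that the ruleset lives in data, not in people's heads. When a model or harness changes, you rerun the same cases and see what moved before anyone ships anything.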

You run short cycles, learn fast, and move what proves itself into the next one. This isn't a one-and-done exercise yet. The ground is still moving. Models improve. Harnesses improve. Features improve. Interfaces improve.

That shouldn't be a reason to wait. Honestly, the opposite is true. A lot of the hard prep work you do now becomes the foundation that lets you absorb each new AI improvement faster and with less chaos. You do not need to live on the bleeding edge. You do need to stay on the leading edge, because this field is moving too quickly to stand still without consequence.

Governance isn't a brake on AI. It is part of what makes it work

One of the more important themes in the discussion was governance, security boundaries, and organisational control. That concern is real, especially in larger environments. Questions around data boundaries, subprocessors, institutional knowledge and enterprise approval are not side issues. They are central.

Many organisations still frame governance the wrong way though. They treat it as the thing you have to bolt on to keep AI safe. The reality is that governance should be treated as a first-class citizen in any serious AI deployment.

Understanding your controls, implementing auditability, building traceability, and preserving the chain of how outputs were created isn't just mandatory from a risk perspective. In many cases it actually helps the AI become better. For humans, traceability and auditability can quickly become overwhelming. For AI, that same structure often makes it stronger and more reliable. Clear inputs, visible rules, documented decisions, known reference points and feedback loops all improve performance.
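To make "preserving the chain of how outputs were created" concrete, here is one possible sketch of a tamper-evident audit log using only the Python standard library. It is an illustration under stated assumptions, not a description of any vendor's feature: `AuditLog`, its field names and the `rule_version` label are all hypothetical, and a real deployment would also need storage, access control and retention rules.

```python
import hashlib
import json
from dataclasses import dataclass, field


def _digest(body: dict) -> str:
    """Stable SHA-256 over a record, with keys sorted for determinism."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


@dataclass
class AuditLog:
    """Hypothetical append-only log; each entry points at the previous one."""
    entries: list[dict] = field(default_factory=list)

    def record(self, workflow: str, rule_version: str, inputs: str, output: str) -> dict:
        prev_hash = self.entries[-1]["hash"] if self.entries else ""
        body = {
            "workflow": workflow,
            "rule_version": rule_version,
            # Store fingerprints rather than raw content (an assumption about data policy).
            "input_sha256": hashlib.sha256(inputs.encode()).hexdigest(),
            "output_sha256": hashlib.sha256(output.encode()).hexdigest(),
            "prev_hash": prev_hash,
        }
        body["hash"] = _digest(body)  # computed before the "hash" key is added
        self.entries.append(body)
        return body

    def verify(self) -> bool:
        """Recompute every hash and link; False means the chain was altered."""
        prev_hash = ""
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if entry["prev_hash"] != prev_hash or _digest(body) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True


log = AuditLog()
log.record("monthly_pack", "rules-v1", "raw ledger export", "draft pack text")
log.record("monthly_pack", "rules-v1", "reviewer comments", "final pack text")
print(log.verify())
```

Because each entry includes the hash of the one before it, changing any earlier record breaks verification for the whole chain. That is the kind of structure that is tedious for humans to maintain by hand and cheap for a system to maintain automatically.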

So governance isn't just about control. It is also about capability.

The state of play in April 2026

If I had to summarise the field learning right now, it would be this. Yes, AI can absolutely create serious value inside Microsoft-heavy companies. Value doesn't come from access alone though. It comes from doing the hard work to make AI reliable.

Right now, Microsoft still owns the operating environment in many organisations. Claude is increasingly being seen as the intelligence layer people actually want to work with when quality of thinking, output quality and real task completion matter most. That perception came through clearly in the practitioner discussion, especially around documents, Excel, PowerPoint-adjacent workflows, Cowork and Claude Code.

The biggest opportunities today aren't vague "AI transformation" programs. They are well-scoped workflows. The firms that win from here won't be the ones that simply rolled out a generic tool first. They will be the ones that invested in the connectors, rules, evals, tests, traceability and deployment discipline required to turn raw AI into a reliable working system.

That is where the compounding starts. Once it starts, it tends to stack quickly.

If you are a COO or CTO weighing where to start, the AI Fitness Review is designed to be that first innovation-sprint diagnostic. One workflow scoped. One team chosen. Controls in place from day one. If you are a practice lead or compliance lead, the same offer surfaces the first supervised workflow you can stand behind. Either way, it is the first rung on the ladder, not the whole ladder.